So in my wall example, the game version might have 2 or 3 4k textures, vs. literally hundreds for all the bricks, grout, defects, chipped faces, etc. of a movie-quality wall.

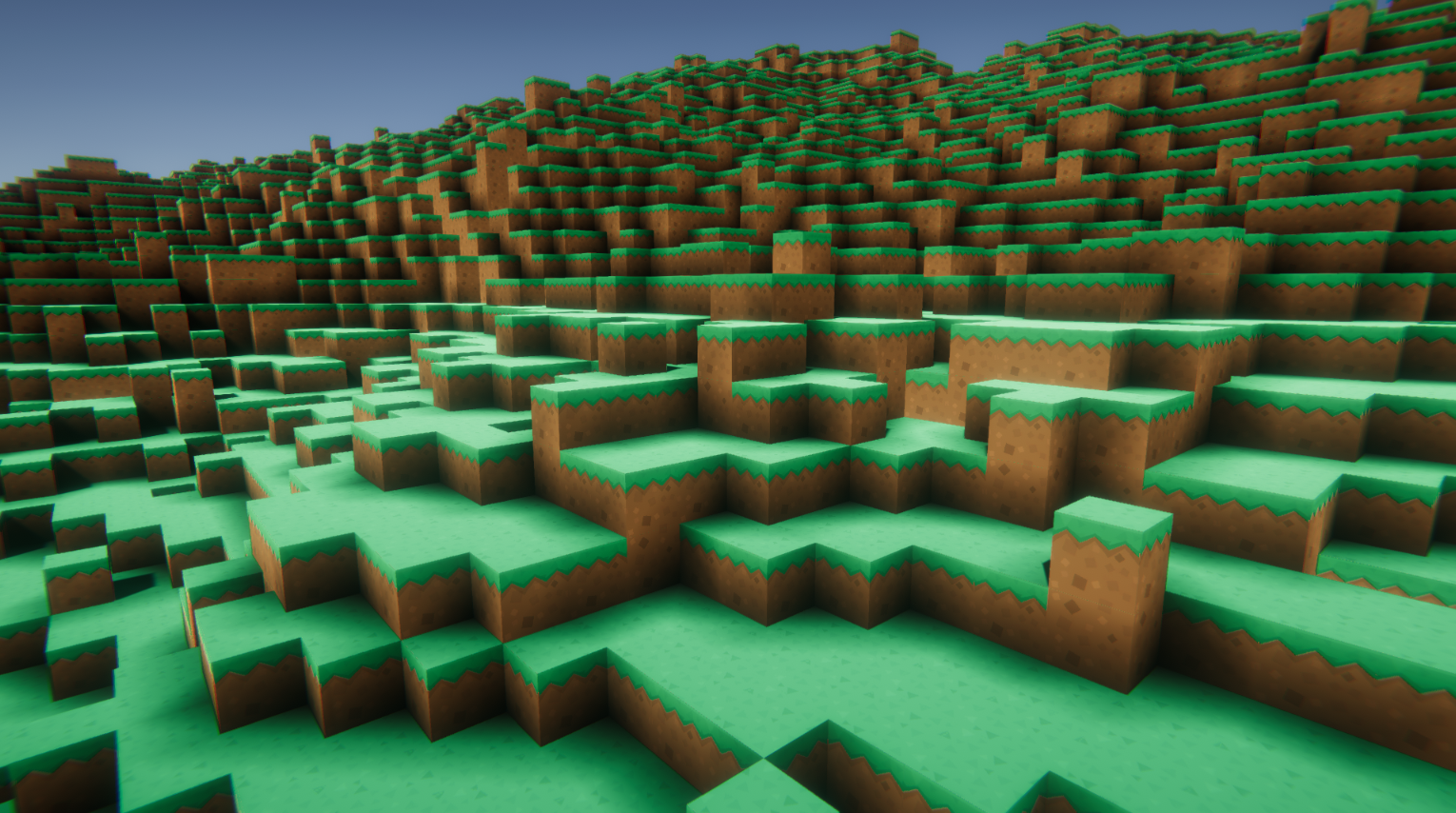

Imagine a brick wall rendered for a video game vs. one rendered for a movie. The one for the game is probably going to be a plane with a couple of textures on it, because the wall is a background element that the player isn't going to get up close to. In the movie, the wall is more likely to be built from a handful of individually modeled bricks with much more detailed surface textures, because maybe the director wants the camera to start really close to the surface of that wall and pull out to a wider shot. That means the individual brick you start zoomed in on might have a 4k texture all to itself, whereas the entire wall in the video game could easily be a single 4k texture, since the player doesn't get close enough to notice the missing detail.

Every little bit of that detail adds more data you have to track. Now multiply that level of detail across every rendered thing in the scene, because the director may want to reframe the shot, or you need realistic lighting to sell that a rendered thing is 'real' and integrated with the filmed footage.
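To put rough numbers on the wall comparison, here's a back-of-the-envelope sketch. Everything in it is an assumption for illustration: I'm treating "4k" as 4096x4096, assuming uncompressed 8-bit RGBA with no mipmaps, and picking 200 as a stand-in for "literally hundreds". Real pipelines use compression and other formats, so treat the output as a ballpark only. The `texture_bytes` helper is made up for this example.

```python
# Rough texture memory estimate. All figures are illustrative assumptions:
# "4k" = 4096x4096, uncompressed 8-bit RGBA, no mipmaps, no compression.

MIB = 1024 ** 2
GIB = 1024 ** 3
FOUR_K = 4096


def texture_bytes(width, height, channels=4, bytes_per_channel=1):
    """Raw size in bytes of one uncompressed texture (hypothetical helper)."""
    return width * height * channels * bytes_per_channel


one_texture = texture_bytes(FOUR_K, FOUR_K)

# Game wall: a plane with about 3 textures (assumed count).
game_wall = 3 * one_texture

# Movie wall: 200 textures as a stand-in for "literally hundreds" (assumed).
movie_wall = 200 * one_texture

print(f"one 4k texture: {one_texture / MIB:.0f} MiB")
print(f"game wall:      {game_wall / MIB:.0f} MiB")
print(f"movie wall:     {movie_wall / GIB:.1f} GiB")
```

Under those assumptions that's about 192 MiB for the game wall vs. about 12.5 GiB for the movie wall, and that's one wall, before you multiply across everything else in the scene.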

In a movie, a single element of a scene might have as many polygons as an entire character in a video game, and it's because of the differences in how you 'film' them. It's more to do with movies using higher-fidelity assets than you'd typically use for a game, and games are what GPUs are made for.
